Dropbox LAN Sync is a feature that allows you to download files from other computers on your network, saving time and bandwidth compared to downloading them from Dropbox servers.

Imagine that you are at home or at your office, and someone on the same network as you adds a file to a shared folder that you are a part of. Without LAN Sync, their computer would upload the file to Dropbox, and then you would download the file from Dropbox. With LAN Sync, you can download the file straight from their computer. Since you are on the same network, this transfer will be much faster and will save you bandwidth.

As the number of companies and offices using Dropbox has increased, the use cases for LAN Sync have grown, and the feature was recently rewritten and improved. Here’s a look inside how it works.

Blocks and Namespaces

First, we need to talk about some of the abstractions that Dropbox uses internally, namely blocks and namespaces. If you’ve read other articles on the tech blog, you may already be familiar with these.

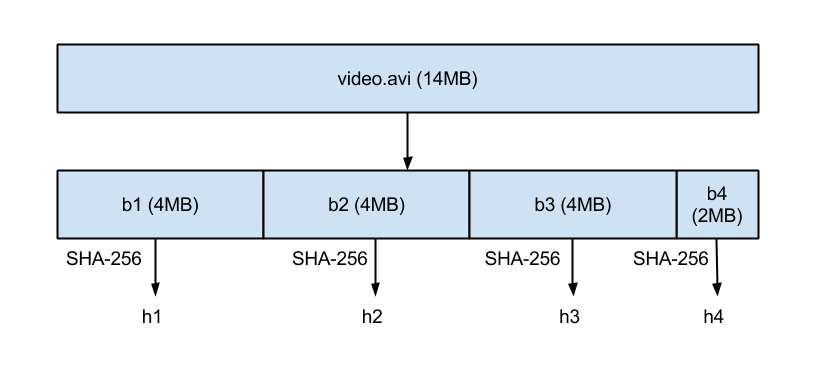

Files in Dropbox are split up into 4MB blocks, where the last block is smaller when the file size is not evenly divisible by 4MB. Each block is addressed by the SHA-256 hash of its contents. A file, then, can be described by the list of hashes of blocks that make it up.

Here, the file could be described as 'h1,h2,h3,h4', where h1, h2, etc. are the hashes for their respective blocks.
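To make the block abstraction concrete, here is a minimal sketch of how a file's contents could be turned into a list of block hashes. The function name `block_hashes`, the reading of 4MB as 4 × 1024 × 1024 bytes, and the hex encoding of the digests are assumptions made for illustration, not details of Dropbox's actual implementation.

```python
import hashlib

# Assumption: "4MB" is taken to mean 4 * 1024 * 1024 bytes.
BLOCK_SIZE = 4 * 1024 * 1024


def block_hashes(data: bytes, block_size: int = BLOCK_SIZE) -> list[str]:
    """Split data into fixed-size blocks and return each block's SHA-256 hex digest.

    The final block is shorter when len(data) is not a multiple of block_size.
    """
    return [
        hashlib.sha256(data[offset:offset + block_size]).hexdigest()
        for offset in range(0, len(data), block_size)
    ]
```

Joining the returned list with commas gives a description of the same shape as 'h1,h2,h3,h4'.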

Namespaces are the primitive behind Dropbox's permissions model. They can be thought of as a directory with specific permissions. Every account has a namespace which represents its personal Dropbox account and all the files in it. In addition, shared folders are namespaces which multiple accounts can have access to.

Where Does LAN Sync Fit In?

The key takeaway from the last section is that file download requests can be thought of as a series of (hash, namespace) requests, each asking to download the given block, authenticated as the given namespace. Without LAN Sync, these requests would be queued up and sent to the block server, which would return block data. This is a nice abstraction, because the block download pipeline does not need to know about filenames or how the blocks fit together.

This also means that LAN Sync only syncs actual file data, and not file metadata, such as filenames. By ensuring that all metadata is received from Dropbox’s servers, we can ensure that everyone is always in a consistent state.

With LAN Sync, we try to download blocks directly from peers on the LAN first, using the block server only if that fails. Given a block and a namespace, the interface to LAN Sync either returns the block or an indicator that it was not found.
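The LAN-first, block-server-second flow can be sketched as a single function. The names `fetch_block`, `lan_fetch`, and `server_fetch` are hypothetical, and using `None` as the "not found" indicator is an assumption; the hash check reflects the fact that blocks are addressed by the SHA-256 hash of their contents.

```python
import hashlib
from typing import Callable, Optional

# Hypothetical signature: (block_hash, namespace_id) -> block bytes, or None if not found.
LanFetch = Callable[[str, int], Optional[bytes]]
# Hypothetical signature: (block_hash, namespace_id) -> block bytes.
ServerFetch = Callable[[str, int], bytes]


def fetch_block(
    block_hash: str,
    namespace_id: int,
    lan_fetch: LanFetch,
    server_fetch: ServerFetch,
) -> bytes:
    """Try the LAN first; fall back to the block server if that fails."""
    data = lan_fetch(block_hash, namespace_id)
    # Accept LAN data only if it hashes to the address we asked for.
    if data is not None and hashlib.sha256(data).hexdigest() == block_hash:
        return data
    return server_fetch(block_hash, namespace_id)
```

Because the caller only sees bytes coming back, the rest of the download pipeline does not need to know which path served the block.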

ARCHITECTURE

There are three main components of the LAN Sync system that run on the desktop app: the discovery engine, the server, and the client. The discovery engine is responsible for finding machines on the network that we can sync with (i.e., machines which have access to namespaces in common with ours). The server handles requests from other machines on the network, serving requested block data. The client is responsible for trying to request blocks from the network.

DISCOVERY ENGINE

The first challenge in LAN Sync is finding other machines on the LAN to sync with. To do this, each machine periodically sends and listens for UDP broadcast packets over port 17500 (which is reserved by IANA for LAN Sync). These packets contain:

- The version of the protocol used by that computer

- The namespaces supported

- The TCP port that they are running the server on (17500 is reserved, but that is no guarantee that it will be available, so we may bind to a different port)

- A random identifier. We could identify requests by IP address, but it is hard to avoid connecting to ourselves or to avoid seeing the same peer twice just using that (we may get their packets over multiple interfaces, for example).

When a packet is seen, we add the IP address to a list for each namespace, indicating a potential target.
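As a rough illustration of the discovery bookkeeping, the sketch below encodes an announcement with the four fields listed above and records peers per namespace. The JSON wire format, the field names, the `PROTOCOL_VERSION` value, and the `PeerTable` class are all assumptions for the example; the real packet layout is not described here. Sending and receiving the UDP broadcasts is left out.

```python
import json
from collections import defaultdict

PROTOCOL_VERSION = 1  # hypothetical value


def encode_announcement(peer_id: str, namespaces: list[int], tcp_port: int) -> bytes:
    """Build one discovery packet (JSON is an assumed encoding)."""
    return json.dumps({
        "version": PROTOCOL_VERSION,
        "namespaces": sorted(namespaces),
        "port": tcp_port,
        "id": peer_id,
    }).encode("utf-8")


class PeerTable:
    """Tracks potential sync targets for each namespace."""

    def __init__(self, own_id: str) -> None:
        self.own_id = own_id
        # namespace -> {peer_id: (ip, port)}
        self.by_namespace: dict[int, dict[str, tuple[str, int]]] = defaultdict(dict)

    def handle_packet(self, payload: bytes, sender_ip: str) -> None:
        msg = json.loads(payload.decode("utf-8"))
        # Skip our own broadcasts and peers speaking a different protocol version.
        if msg["id"] == self.own_id or msg["version"] != PROTOCOL_VERSION:
            return
        for namespace in msg["namespaces"]:
            # Keying by the random identifier means a peer seen on several
            # interfaces is stored once, with its most recent address.
            self.by_namespace[namespace][msg["id"]] = (sender_ip, msg["port"])

    def peers_for(self, namespace: int) -> list[tuple[str, int]]:
        return list(self.by_namespace.get(namespace, {}).values())
```

Keying the table by the random identifier rather than by IP address is what lets this sketch ignore its own packets and collapse duplicates of the same peer.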

PROTOCOL

The actual block transfer is done over HTTPS. Each computer runs an HTTPS server with endpoints of the form '/blocks/[namespace_id]/[block_hash]'. It supports the methods GET and HEAD. HEAD is used for checking if the block exists (200 means yes, 404 means no), and GET will actually retrieve the block. HEAD is useful in that it allows us to poll multiple peers to see if they have the block, but only download it from one of them.
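The request semantics can be sketched as a pure function, independent of any HTTP library. The names `block_path` and `handle_request` and the in-memory `store` dictionary are hypothetical, and returning 405 for other methods is an assumption; the text above only specifies the 200 and 404 responses for HEAD and GET.

```python
def block_path(namespace_id: int, block_hash: str) -> str:
    """Build a path of the form /blocks/[namespace_id]/[block_hash]."""
    return f"/blocks/{namespace_id}/{block_hash}"


def handle_request(
    method: str,
    path: str,
    store: dict[tuple[str, str], bytes],
) -> tuple[int, bytes]:
    """Return (status, body) for a request against an in-memory block store.

    store maps (namespace_id, block_hash), both as strings, to block data.
    """
    parts = path.split("/")
    if len(parts) != 4 or parts[0] != "" or parts[1] != "blocks":
        return 404, b""
    if method not in ("GET", "HEAD"):
        return 405, b""  # assumption: other methods are rejected
    block = store.get((parts[2], parts[3]))
    if block is None:
        return 404, b""
    # HEAD only reports existence; GET also returns the data.
    return 200, (block if method == "GET" else b"")
```

A client can therefore send cheap HEAD requests to several peers and issue a single GET to whichever one answers 200.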

Because Dropbox aims to keep all of your data safe, we want to make sure that only clients authenticated for a given namespace can request blocks. We also want to make sure that computers cannot pretend to be servers for namespaces that they do not control. The concern here is not that they might try to give you bad data (we can check for that by ensuring that it hashes to the right thing) but rather that they might be able to learn something by watching which block hashes you request.

The solution to this is to use SSL in a creative way. We generate an SSL key/certificate pair for every namespace. These pairs are distributed from Dropbox servers to users’ computers that are authenticated for the namespace, and they are rotated any time membership changes, e.g., when someone is removed from a shared folder. We can require both ends of the HTTPS connection to authenticate with the same certificate (the certificate for the namespace). This proves that both ends of the connection are authenticated.

One interesting problem: when making a connection, how do we tell the server which namespace we are trying to connect for? For this, we use Server Name Indication (SNI), so that the server knows which certificate to use.
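Using Python's standard `ssl` module, certificate selection by SNI might look roughly like the sketch below. The hostname scheme in `sni_name`, the helper names, and the idea of trusting only the namespace's own certificate via `load_verify_locations` are assumptions for illustration; how Dropbox maps namespaces to SNI names is not stated here.

```python
import ssl
from typing import Optional


def sni_name(namespace_id: int) -> str:
    # Hypothetical naming scheme for carrying the namespace in the SNI field.
    return f"ns-{namespace_id}.lansync.invalid"


def namespace_server_context(
    certfile: Optional[str] = None, keyfile: Optional[str] = None
) -> ssl.SSLContext:
    """Build a server-side context that also requires a client certificate."""
    context = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    context.verify_mode = ssl.CERT_REQUIRED  # the client must authenticate too
    if certfile is not None:
        context.load_cert_chain(certfile, keyfile)
        # Assumption: trust only the namespace's own certificate.
        context.load_verify_locations(cafile=certfile)
    return context


def make_sni_callback(contexts: dict[str, ssl.SSLContext]):
    """Return a callback that switches to the context matching the SNI name."""

    def callback(ssl_socket, server_name, initial_context):
        context = contexts.get(server_name)
        if context is None:
            # Unknown or missing namespace: abort the handshake.
            return ssl.ALERT_DESCRIPTION_UNRECOGNIZED_NAME
        ssl_socket.context = context
        return None

    return callback
```

The callback would be attached to the listening socket's context with `base_context.sni_callback = make_sni_callback(contexts)`, and a client would pass `server_hostname=sni_name(namespace_id)` when wrapping its socket.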

Note that in this diagram, the HEAD request seems useless, but when there is more than one peer, we need to avoid downloading block data twice. Also, the key distribution happens when the computer comes online, not only when using LAN Sync.

SERVER/CLIENT

Given this protocol, the server is not complicated. It just needs to know which blocks are present and where to find them.

The client maintains a list of peers for each namespace (this list comes from the discovery engine). When the LAN Sync system gets a request to download a block, it sends a HEAD request to a random sample of the peers that it has discovered for the namespace, and then requests the block from the first one that responds saying it has the block.
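The peer-selection step can be sketched as follows. The function `find_and_fetch`, its `has_block` and `get_block` callables (standing in for the HEAD and GET requests), and the default `max_peers` value are hypothetical. For simplicity this version asks the sampled peers one at a time, whereas a real client would presumably issue the HEAD requests concurrently.

```python
import random
from typing import Callable, Optional, Sequence


def find_and_fetch(
    peers: Sequence[str],
    has_block: Callable[[str], bool],   # stands in for a HEAD request
    get_block: Callable[[str], bytes],  # stands in for a GET request
    max_peers: int = 3,                 # hypothetical limit
) -> Optional[bytes]:
    """Ask a random sample of peers for a block; download from the first that has it."""
    sample = random.sample(list(peers), min(max_peers, len(peers)))
    for peer in sample:
        if has_block(peer):
            # Only one GET is issued, so block data is never downloaded twice.
            return get_block(peer)
    return None  # not found on the LAN; the caller falls back to the block server
```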

One important optimization to avoid the latency of an SSL handshake each time we need a block is to use connection pools to allow us to reuse already-started connections. We don’t open a connection until it is needed, and once it is open we keep it alive in case we need it again. Designing these pools was a good exercise in concurrency, since they needed to be able to give out connections or have them released back into the pool or be shut down when the connection died, all while being accessed from multiple threads.
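A minimal thread-safe pool along these lines is sketched below. The `ConnectionPool` class, its method names, and the `connect` factory are hypothetical and far simpler than a production pool; the sketch only shows lazy opening, reuse, a per-peer connection limit, and discarding dead connections.

```python
import threading
from collections import deque
from typing import Any, Callable, Optional


class ConnectionPool:
    """Lazily opens up to max_connections connections and reuses idle ones."""

    def __init__(self, connect: Callable[[], Any], max_connections: int = 2) -> None:
        self._connect = connect
        self._max = max_connections
        self._idle: deque = deque()
        self._open = 0  # connections currently open (idle or in use)
        self._cond = threading.Condition()

    def acquire(self, timeout: Optional[float] = None) -> Optional[Any]:
        """Return a connection, or None if none becomes available in time."""
        with self._cond:
            ready = self._cond.wait_for(
                lambda: bool(self._idle) or self._open < self._max, timeout
            )
            if not ready:
                return None
            if self._idle:
                return self._idle.pop()
            self._open += 1  # reserve a slot before connecting
        try:
            return self._connect()  # connect outside the lock
        except Exception:
            self.discard()
            raise

    def release(self, conn: Any) -> None:
        """Hand a healthy connection back for reuse."""
        with self._cond:
            self._idle.append(conn)
            self._cond.notify()

    def discard(self) -> None:
        """Report that a checked-out connection died, freeing its slot."""
        with self._cond:
            self._open -= 1
            self._cond.notify()
```

Opening the connection outside the lock keeps a slow handshake with one peer from blocking other threads that only want to release or reuse a connection.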

Of course, the most important thing is getting your files to you quickly, and we wouldn’t want a slow computer or connection on your network to slow things down. To this end, we have a fairly aggressive timeout on how long we are willing to wait before falling back to the block server. In addition, we limit the number of connections we are willing to make to any single peer, and how many peers we are willing to ask for a block. We made an effort to tune these parameters to work well, and they can be controlled by Dropbox’s servers if needed.

If the block is found on the LAN and downloaded successfully, we are done. Otherwise, we fall back to getting the block from Dropbox’s block server, just as we would without LAN Sync.

Can I Use It?

LAN Sync is currently a part of the Dropbox app! It can be toggled from the Network tab of the preferences page. Because it is designed to be transparent to the user, you may not have even noticed it while it was handling your syncing.

If you want to force a LAN Sync, you’ll need two computers on the network with either the same account or a shared folder in common. Add a file to one of the computers, and the other computer should attempt a LAN Sync.

If you’re passionate about sync internals and performance, you should check out our jobs page. We’re hiring.